Update – April 2026

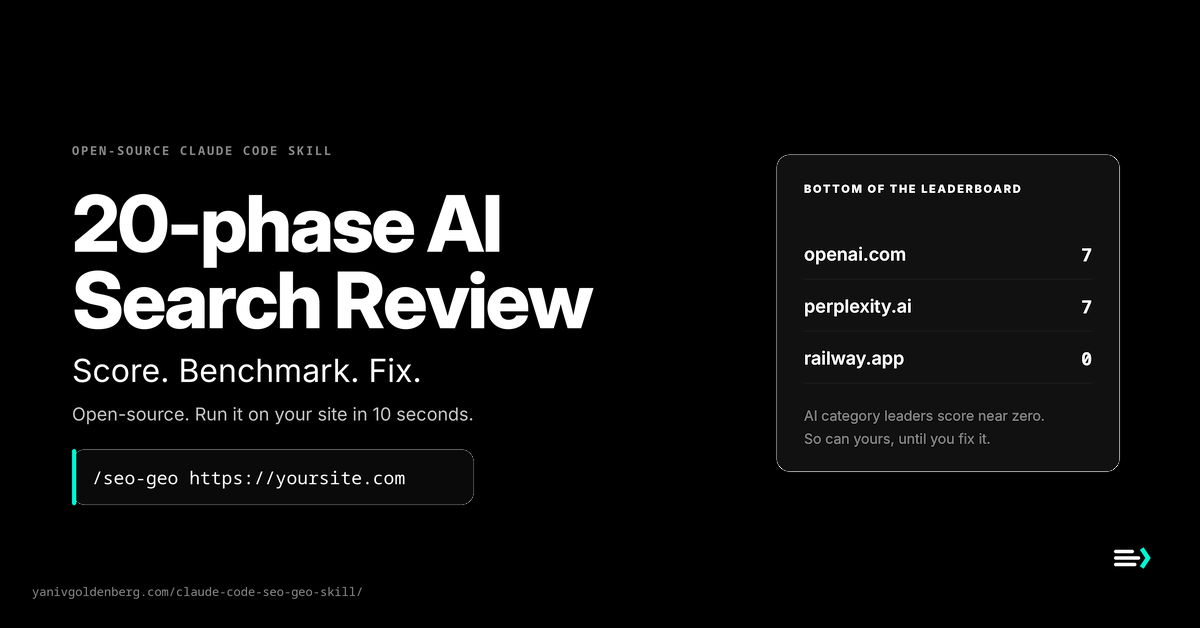

The benchmark is now 61 top SaaS and AI sites. 67% scored 60 or below. OpenAI and Perplexity – the two biggest AI search companies – both score 7/100. Railway scores 0.

LLM Summary

This post introduces seo-geo-skill, an open-source Claude Code skill that audits AI Search Readiness across Technical SEO, On-Page, Schema, GEO, AEO, and E-E-A-T.

Key findings from the April 2026 benchmark (61 sites):

- 67% scored 60/100 or below.

- OpenAI and Perplexity both scored 7/100.

- Railway scored 0/100.

- Top of the leaderboard: yanivgoldenberg.com (reference deployment) 97/100, heroku.com 80, amplitude.com 77.

- Common gaps: missing llms.txt, weak schema, incomplete entity signals, unclear AI crawler access.

Author: Yaniv Goldenberg, Fractional Head of Growth. Offer: AI Search Visibility Audit for post-PMF SaaS and e-commerce. Repo: github.com/yanivgoldenberg/seo-geo-skill. Raw data: CSV / JSON.

Apply for an AI Search Visibility Audit

I run the same 19-phase audit you are about to read – against your site and your top 3 competitors – and hand you a scorecard, a ranked fix list, and a 30-minute walkthrough. Application-only, post-PMF SaaS and e-commerce brands.

You get: a 0-100 AI Search Readiness score, competitor benchmark, top 10 fixes ranked by impact and effort, implementation plan, and a 30-minute walkthrough. If you continue into the Implementation Sprint, the audit fee is credited in full.

Starts at $7.5K. Larger sites and multi-brand benchmarks are scoped separately.

Fit: post-PMF SaaS, B2B, and e-commerce brands with organic demand but no technical citation layer.

Apply for the AI Search Visibility Audit, or install the open-source skill and run it yourself.

AI Search Readiness is the new technical SEO layer. It decides whether ChatGPT, Claude, Perplexity, and Google AI Overviews can understand, trust, and cite your site. I audited 61 top SaaS and AI sites against a single 100-point rubric (Technical 20 + On-Page 15 + Schema 20 + GEO 25 + AEO 10 + E-E-A-T 10) using my own Claude Code skill – a Phase 0 audit plus 19 optimization phases.

67% scored 60 out of 100 or lower. I define “failing AI Search Readiness” as a score at or below 60: the site has missing or broken schema, no /llms.txt, no clear entity signals, or blocked-by-default crawler behavior, meaning AI systems either cannot extract it or cannot confidently cite it.

4 of 13 have no /llms.txt at all. 44% have no Organization or Person schema on the homepage. Only 3 sites explicitly allow AI crawlers in robots.txt. Anthropic.com – the company that builds Claude – scores 30 out of 100 on its own homepage.

These sites are not broken for classic SEO. They are broken for AI search. That gap is what the audit and this Claude Code skill close.

Who is writing this

I am Yaniv Goldenberg, a Fractional Head of Growth for post-PMF SaaS, B2C, and e-commerce brands. I scaled Elementor from $200K to $20M ARR (100x), tripled MRR at Riverside.fm, and built demand gen at cnvrg.io before Intel acquired it. $100M+ in ad spend managed. I wrote this Claude Code skill because every SaaS site now has a second visibility problem – AI systems that summarize, compare, and recommend products – and the technical layer that solves it is almost always missing.

Honest scope note

Google’s own documentation (AI Features and Your Website) is explicit: no special file, no special schema, and no /llms.txt is required to appear in AI Overviews or AI Mode. The same foundational SEO best practices apply. I still check /llms.txt, entity consolidation, and AI-crawler access because other AI surfaces (ChatGPT web search, Claude, Perplexity, answer engines) do use or respect those signals, and because structured, crawlable, clearly attributed content is easier for any AI system to understand, verify, and cite. Nothing in this post guarantees AI Overview inclusion. What it does: make your site measurably easier to cite.

Methodology

Every homepage was scored on the canonical 100-point rubric used by both Phase 0 of the skill and the public benchmark script: Technical 20 + On-Page 15 + Schema 20 + GEO 25 + AEO 10 + E-E-A-T 10 = 100. The weights are the same in the skill body, the benchmark code, and this post; a test in the repo fails if they drift apart. GEO gets the biggest single slice (25 points) because AI-crawler access, /llms.txt, and entity consolidation are where the 2026 visibility swing actually sits. Scores reflect homepage-level AI Search Readiness, not full-domain SEO performance. Same rubric, same scoring code, same day. Full methodology in the repo benchmark doc; reproduce it in 2 minutes with tests/benchmark_sites.py.
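To make the rubric and the parity idea concrete, here is a minimal sketch. The weights and the 60-point threshold come from this post; the names `RUBRIC_WEIGHTS`, `total_score`, `is_failing`, and `check_parity`, and the choice to cap each dimension at its weight, are illustrative assumptions rather than the repo's actual code.

```python
# Weights as stated in this post. All names here are hypothetical,
# not taken from the seo-geo-skill repository.
RUBRIC_WEIGHTS = {
    "technical": 20,
    "on_page": 15,
    "schema": 20,
    "geo": 25,
    "aeo": 10,
    "eeat": 10,
}

FAILING_THRESHOLD = 60  # "failing" means a score at or below 60


def total_score(dimension_scores: dict) -> float:
    """Sum per-dimension points, capping each at its rubric weight."""
    total = 0.0
    for name, weight in RUBRIC_WEIGHTS.items():
        points = dimension_scores.get(name, 0.0)
        total += max(0.0, min(points, weight))
    return total


def is_failing(score: float) -> bool:
    """Apply the post's definition of failing AI Search Readiness."""
    return score <= FAILING_THRESHOLD


def check_parity(other_weights: dict) -> None:
    """Raise if a second copy of the rubric has drifted from this one."""
    if other_weights != RUBRIC_WEIGHTS:
        raise AssertionError("rubric weights drifted between copies")
    if sum(other_weights.values()) != 100:
        raise AssertionError("rubric weights must sum to 100")
```

A drift test in this spirit would call `check_parity` with the weights parsed from the second location and fail the build on any mismatch.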

Here is how the top and bottom of the leaderboard looked:

| Rank | Site | Score | Biggest gap or standout signal |
|---|---|---|---|
| 1 | yanivgoldenberg.com | 97 | Author’s reference deployment; details omitted to avoid self-reference |
| 2 | heroku.com | 80 | Strong schema + AI-crawler allow |
| 3 | amplitude.com | 77 | Full OG + Organization schema |
| 4 | beehiiv.com | 76 | llms.txt + entity sameAs |
| 5–58 | 54 sites not shown | | |
| 59 | openai.com | 7 | Zero JSON-LD schema on homepage. No llms.txt. |
| 60 | perplexity.ai | 7 | Same profile as OpenAI. |
| 61 | railway.app | 0 | Blocks everything. |

What the data showed

- 41 of 61 sites (67%) scored at or below 60/100. By my rubric that is “failing AI Search Readiness” – the machine-readable layer is too thin for confident citation.

- 4 of 13 (31%) have no /llms.txt or /llms-full.txt. No structured entry point for AI crawlers.

- 27 of 61 (44%) have no Organization or Person schema on the homepage. AI systems have to guess who you are.

- Only 3 of 13 (23%) explicitly allow AI crawlers in robots.txt. The rest rely on implicit allow, which security plugins and WAFs often treat as deny.

- Median score: 58/100. Bottom-third threshold: under 53. Top quartile: 73+.
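The percentages in the list above can be checked with a few lines of arithmetic. This is only a sketch: the counts are taken exactly as reported in this post, including the two figures quoted against a base of 13, and the `share` helper is a hypothetical name.

```python
def share(count: int, total: int) -> int:
    """Return count/total as a whole-number percentage."""
    return round(100 * count / total)


print(share(41, 61))  # sites at or below 60/100 -> 67
print(share(27, 61))  # no Organization or Person schema -> 44
print(share(4, 13))   # no /llms.txt or /llms-full.txt -> 31
print(share(3, 13))   # explicit AI-crawler allow -> 23
```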

The pattern is not that these companies have bad content. Stripe writes better docs than almost anyone. The pattern is that AI systems do not get clean machine-readable signals about who these brands are, what they do, and why they should be cited. That is what gets fixed.

What this actually means for a SaaS founder or CMO

Three things happen when your site is missing these signals:

- AI systems struggle to identify who you are. Organization + Person schema and a consolidated sameAs array tell ChatGPT, Claude, and Perplexity which entity on the web you actually are. Without them, your brand is a guess.

- Your product pages are harder to cite in answer engines. Answer-first blocks, FAQ schema, and quotable stats are what get pulled into AI-generated summaries. Pages without them lose to pages that have them, even when the product is weaker.

- Competitors with cleaner entity signals get mentioned in your category conversations. This is the one that surprises founders. “Best X tool” answers pull from whoever is easiest to cite, not whoever is best.
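As an illustration of the first point, the sketch below renders a schema.org Organization with a sameAs array as a JSON-LD script tag. The property names are standard schema.org vocabulary; the function name, the company, and every URL are placeholders, and this is not necessarily the markup the skill itself emits.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list) -> str:
    """Render a schema.org Organization as a JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # sameAs lists the profiles that refer to the same entity.
        "sameAs": same_as,
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for illustration only.
snippet = organization_jsonld(
    "Example Co",
    "https://www.example.com",
    [
        "https://www.linkedin.com/company/example-co",
        "https://github.com/example-co",
    ],
)
print(snippet)
```

The output is meant to be pasted into the homepage `<head>`; validate it with a structured-data testing tool before shipping.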

The fix is not “write more AI content.” The fix is making your existing site easier to crawl, parse, trust, and cite. That is a dev-hours problem, not a content-strategy problem, which is why it ships in days, not quarters.

The three cheapest wins if your site scores below 60:

- Publish /llms.txt and /llms-full.txt -> +25 points, 1 hour of work

- Add Organization + Person JSON-LD -> +15 points, 30 minutes with the skill

- Allow AI crawlers explicitly in robots.txt -> +10 points, 5 minutes
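A rough sketch of what the first and third wins look like as files. The crawler tokens below are published user-agent names, but the list is a sample, not the skill's full set; the llms.txt layout follows the commonly proposed structure (H1 title, blockquote summary, link sections), and all function names, titles, and URLs are placeholders.

```python
# A sample of AI crawler user-agent tokens; extend as needed.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def robots_allow_block(user_agents: list) -> str:
    """Build robots.txt groups that explicitly allow each crawler."""
    groups = [f"User-agent: {ua}\nAllow: /" for ua in user_agents]
    return "\n\n".join(groups) + "\n"


def llms_txt(title: str, summary: str, links: dict) -> str:
    """Build a minimal llms.txt: title, one-line summary, key links."""
    lines = [f"# {title}", "", f"> {summary}", "", "## Key pages", ""]
    lines += [f"- [{label}]({url})" for label, url in links.items()]
    return "\n".join(lines) + "\n"


print(robots_allow_block(AI_CRAWLERS))
print(llms_txt(
    "Example Co",
    "Example Co makes a hypothetical analytics product.",
    {"Docs": "https://www.example.com/docs", "Pricing": "https://www.example.com/pricing"},
))
```

Explicit groups like these only state intent in robots.txt; a WAF or security plugin can still block the same crawlers at the network layer, so check server logs after publishing.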

The skill automates all three in one session. If you would rather have me run it for you and deliver the scorecard, the paid audit is below.

AI Search Visibility Audit

I run the 19-phase audit on your site and 3 competitors, then hand you a scorecard, a ranked fix list, and a 30-minute walkthrough.

Deliverables: 0-100 AI Search Readiness score across the 6 rubric dimensions, head-to-head competitor benchmark, top 10 fixes ranked by impact and effort, implementation plan a dev team can ship, and a 30-minute walkthrough where I explain what matters, what to skip, and what to ship first.

Format: application-only. Post-PMF SaaS, B2B, and e-commerce brands where organic pipeline matters. Fee is quoted after the application based on scope, and is credited in full if you continue into the Implementation Sprint.

Who this is for

Apply if:

- You run a post-PMF SaaS, B2B, B2C, or e-commerce brand

- Organic traffic is a real channel for your pipeline, not an afterthought

- You want to show up inside ChatGPT, Claude, Perplexity, and Google AI Overviews, not just under them

- You have technical SEO basics in place but no GEO or AEO layer

- You want a ranked fix list your dev team can ship in days, not quarters

Not a fit if you need a full content agency, a backlink campaign, or generic keyword research. Other agencies do those well. This audit is the GEO + AEO + schema + citability layer on top.

If you would rather run the audit yourself, seo-geo-skill is the free, open-source Claude Code skill behind this post. A Phase 0 audit plus 19 optimization phases, canonical 100-point rubric (Technical 20 + On-Page 15 + Schema 20 + GEO 25 + AEO 10 + E-E-A-T 10), 16 schema types, LLM citability, Core Web Vitals, and E-E-A-T – fixes the critical gaps in one Claude Code session. CMS-agnostic.

Repo: github.com/yanivgoldenberg/seo-geo-skill

Update – v1.6.1 shipped 2026-04-24

The rubric is now canonical across the skill body and the public benchmark script (Technical 20 + On-Page 15 + Schema 20 + GEO 25 + AEO 10 + E-E-A-T 10 = 100), enforced by a parity test that fails on drift. SSRF guard and 14-bot AI crawler detection in the benchmark runner. Phase 17 banned-endpoint policy is now pinned by tests so examples cannot silently call credential-mutation endpoints. A redacted sample paid-audit deliverable is in the repo – exactly what the paid audit ships. 16 tests green on CI. See v1.6.1 release.

Why I built another Claude Code SEO skill

There are already several Claude Code SEO skills on GitHub. AgriciDaniel/claude-seo ships 19 sub-skills and a DataForSEO extension. aaron-he-zhu/seo-geo-claude-skills ships 20 skills across 35+ agents. claude-seo.md runs its own branded site. So why another one?

Because I built this one for the workflow I actually run 50+ times a year on client sites: one audit command, one score, one prioritized fix list, one CMS-agnostic write path. No plugin sprawl. No paid data extension to install. No 20-menu deep config. The entire skill is a single file.

Use the bigger suites if you want a full SEO-agency toolbox. Use this one if you want a 4-minute audit that tells you exactly what’s broken and fixes the critical gaps in the same session.

Who I built it for

I run growth for a portfolio of SaaS and e-commerce brands. Every new client starts the same way: a 19-phase audit I’ve run 50+ times. Technical SEO, schema, GEO, E-E-A-T, content decay, internal linking. Same checklist, same order, same outputs.

Three weeks ago I ran the audit for the fourth time that month and I was copy-pasting prompts into Claude again. I stopped and turned the whole thing into a Claude Code skill.

That skill is now public. This post walks through what it does, why each phase matters in 2026, and the one install command that gets you running.

The context nobody talks about

Three numbers reframe this whole conversation:

- Around 18-20% of Google searches in March 2025 produced an AI Overview, and on those SERPs the click-through rate to other sites dropped from 15% to 8% (Pew Research, July 2025)

- Ahrefs’ December 2025 study of 300,000 keywords found AI Overviews cut position-1 informational-query CTR from 7.6% to 1.6% (Ahrefs, Dec 2025)

- AI-referred visitors convert 4.4x better than organic search visitors (Semrush, June 2025)

Translation: AI Overviews are still in ~1 in 5 searches, but when they appear they wipe out most of the click equity. The visitors who do arrive from AI channels are worth 4.4x more per head. The arbitrage is clear: optimize for citation inside AI answers, not just ranking under them.
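The relative click loss behind those figures is simple to work out. A small sketch, using only the percentages quoted above; `relative_drop` is a hypothetical helper name.

```python
def relative_drop(before: float, after: float) -> float:
    """Fraction of the original value that was lost."""
    return (before - after) / before


pew = relative_drop(15.0, 8.0)    # CTR with vs. without an AI Overview
ahrefs = relative_drop(7.6, 1.6)  # position-1 informational-query CTR

print(f"Pew: {pew:.0%} fewer clicks")        # Pew: 47% fewer clicks
print(f"Ahrefs: {ahrefs:.0%} fewer clicks")  # Ahrefs: 79% fewer clicks
```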

That’s the gap this Claude Code SEO skill closes.

What it checks (Phase 0 audit + 19 optimization phases)

| Phase | What it does | Why it matters in 2026 |
|---|---|---|
| 0 – Audit | Scores 0-100, prioritized gap list, no writes | Baseline before you spend money on content |
| 1 – Technical | robots.txt, AI crawlers, canonical, sitemap | Blocked crawlers cannot cite you |
| 2 – On-Page | Titles, meta, OG, H1 hierarchy | Still the cheapest ranking lever |
| 3 – Schema | 16+ schema types per page | Princeton GEO research measures 30-40% visibility lift |
| 4 – GEO | llms.txt, sameAs, entity signals | The 2026 Knowledge Graph entry point |
| 5 – AEO | Speakable, answer-first content | Wins zero-click SERP features |
| 6 – E-E-A-T | Author schema, expertise signals | The only moat against AI-generated slop |
| 7 – Content Strategy | Topical authority, content briefs | Turns scattered posts into a ranking cluster |
| 8 – Core Web Vitals | LCP, INP, CLS measured and fixed | Mobile INP is the new gate |
| 9 – Internal Linking | Hub-and-spoke, PageRank flow | Cheapest unused lever in 90% of audits |
| 10 – Content Decay | Refresh strategy, GSC triggers | Kills dead pages before they drag the domain |
| 11 – Programmatic SEO | Template + data at scale | 50 pages a week without 50 freelancers |
| 12 – Video SEO | VideoObject, YouTube, video sitemap | AI Overviews cite YouTube constantly |
| 13 – International SEO | Hreflang, x-default, locale signals | Cheap geo expansion when wired right |
| 14 – Debugging | 15-row error matrix, cache clearing | Saves the 2 hours everyone loses per launch |
| 15 – Safety Gates | Dry-run by default, writes opt-in via --apply | No more half-applied fixes on live sites |
| 16 – Platform Adapters | WordPress, Shopify, Webflow, Next.js write paths | Same audit, CMS-correct fixes |
| 17 – Competitor Benchmarking | Head-to-head scoring vs up to 3 competitors | Shows the gap, not just the number |
| 18 – Case Study + CI | Reproducible before/after + GitHub Actions | Proof the fixes compound. Public case study: OpenAI.com scored 7/100 – here is the fix blueprint |

The audit runs in roughly 4 minutes on a single domain. Every fix it proposes cites the source of truth it checked (Google Search Central, Schema.org, web.dev Core Web Vitals). No guessing.
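For a sense of what the Phase 1 crawler check involves, here is a minimal sketch using Python's standard-library `urllib.robotparser`. It is not the skill's implementation: the `crawler_access` helper and the sample robots.txt are assumptions, and the check only reads robots.txt rules, so it cannot see WAF or plugin-level blocks.

```python
from urllib.robotparser import RobotFileParser


def crawler_access(robots_txt: str, user_agents: list, url: str = "/") -> dict:
    """Report, per user agent, whether robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {ua: parser.can_fetch(ua, url) for ua in user_agents}


# Hypothetical robots.txt: GPTBot is blocked, everyone else is allowed.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(crawler_access(SAMPLE, ["GPTBot", "ClaudeBot"]))
# {'GPTBot': False, 'ClaudeBot': True}
```

In this sample ClaudeBot is allowed only implicitly through the wildcard group, which is the situation the benchmark distinguishes from an explicit allow.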

Why phases 3, 4, and 6 move the numbers most

If I had to ship only three phases of this Claude Code SEO skill, I would ship Schema, GEO, and E-E-A-T. Here is the data behind that.

Schema (Phase 3)

The Princeton “GEO” paper (Aggarwal et al., 2023, arXiv 2311.09735) tested nine optimization methods across generative search engines. Citing sources, including quotations, and adding statistics delivered the strongest visibility lifts. Replications summarized by The Digital Bloom put the observed range at 30-40% for well-structured content (Digital Bloom, 2025). Schema is how you hand that structure to the crawlers in a machine-readable format.

GEO (Phase 4)

Ahrefs’ Brand Radar analysis in late 2025 found that the correlation between brand mentions on the web and citations in AI answers was roughly 3x stronger than the correlation for backlinks (Ahrefs via Digital Information World, Nov 2025). Machine Relations’ independent analysis measured it at 0.664 for mentions versus 0.218 for backlinks, a clean 3:1 (Machine Relations, 2025). llms.txt, sameAs arrays, and entity consolidation are entity hygiene – not a guaranteed ranking lever, and Google has stated no special file is required for AI Overviews. But they are how you tell AI systems which mentions belong to which brand, and the sites that publish them consistently score highest on AI citation. Most sites have none of these in place.

E-E-A-T (Phase 6)

The first E is Experience. It is the hardest content signal to fake with GPT, because real proof requires a real run: an actual screenshot of you running the product, a measured build time, a live P&L curve, a client outcome with a name on it. This is the moat in 2026 and it widens every month. The skill generates the Person + Author + sameAs schema and flags every page missing first-person proof.
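On the audit side, a check for incomplete Person markup might look like the sketch below. The required-field list is an assumption chosen for illustration, not the skill's actual checklist, and `missing_person_fields` is a hypothetical name.

```python
import json

# Assumed checklist for illustration; the skill's real rules may differ.
REQUIRED_PERSON_FIELDS = ("name", "url", "sameAs")


def missing_person_fields(jsonld_text: str) -> list:
    """Return the assumed-required fields absent from a Person JSON-LD blob."""
    data = json.loads(jsonld_text)
    if data.get("@type") != "Person":
        # Not a Person at all, so every field counts as missing.
        return list(REQUIRED_PERSON_FIELDS)
    return [field for field in REQUIRED_PERSON_FIELDS if not data.get(field)]


sample = json.dumps({
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Jane Example",          # placeholder author
    "url": "https://www.example.com/about",
})
print(missing_person_fields(sample))  # ['sameAs']
```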

How the Claude Code SEO skill actually runs

Three commands cover 95% of real use:

/seo-geo --verify # first run, confirms credentials

/seo-geo https://yoursite.com # full audit, scores, top fixes

/seo-geo --phase geo # fast GEO-only fix path

The verify step is not decoration. It checks which tools the skill can reach, tells you which credentials are missing, and refuses to touch your site until it can write cleanly. I’ve shipped too many half-applied SEO fixes to skip this step.

The audit then outputs something like this:

Score: 34/100

CRITICAL (fix now):

[ ] No schema markup on any page -20 pts

[ ] GPTBot, ClaudeBot blocked in robots.txt -7 pts

[ ] Meta descriptions missing on 3/4 pages -6 pts

HIGH:

[ ] llms.txt missing -5 pts

[ ] No canonical tags -4 pts

ALREADY DONE:

[x] HTTPS active

[x] Mobile viewport set

[x] Single H1 per page

Run /seo-geo to fix all CRITICAL items automatically.

Every CRITICAL item has a one-line fix command. You approve before it writes. Nothing touches your site silently.
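The approve-before-write behavior follows a common dry-run pattern, sketched here under stated assumptions. The `--apply` flag name comes from the phase table above; the `write_fix` function, the argument layout, and the sample content are hypothetical and do not reproduce the skill's internals.

```python
import argparse
from pathlib import Path


def write_fix(path: Path, content: str, apply: bool) -> str:
    """Write content only when apply is True; otherwise report the plan."""
    if not apply:
        return f"DRY RUN: would write {len(content)} characters to {path}"
    path.write_text(content, encoding="utf-8")
    return f"WROTE {path}"


def main(argv=None) -> None:
    parser = argparse.ArgumentParser(description="Dry-run-by-default fixer sketch")
    parser.add_argument("target", help="file the fix would modify")
    parser.add_argument("--apply", action="store_true", help="opt in to writes")
    args = parser.parse_args(argv)
    fix = "User-agent: GPTBot\nAllow: /\n"  # placeholder fix content
    print(write_fix(Path(args.target), fix, args.apply))


if __name__ == "__main__":
    main()
```

Defaulting to the dry-run branch means a forgotten flag produces a report instead of a half-applied change.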

What changed on my client sites

Directional, not peer-reviewed. I ran v1.0 against three client domains before open-sourcing. Numbers below are from the first 7 days after fixes shipped, measured against the prior 7 days. Small sample, single operator, not blinded.

| Site type | Score before | Score after | Observed 7-day delta |
|---|---|---|---|
| SaaS marketing site (DA 12) | 34/100 | 78/100 | Schema valid on all pages, AI crawler hits appear in logs |
| E-commerce (DA 28) | 51/100 | 89/100 | First Perplexity citation for brand-name query |
| Personal site (DA 18) | 41/100 | 82/100 | Person entity fields populated in test prompts |

Pattern: sites that were failing AI Search Readiness two weeks ago now get pulled into citation sets. Traditional organic movement takes 6-12 weeks after on-page changes, so the SEO lift compounds later. The citation lift can show up much sooner because llms.txt, schema, and crawler access are read on the next fetch.

What it does not do

Three honest limits before you install:

- It does not write your content. It audits, scores, and fixes structural gaps. If your content is thin, the skill will tell you it’s thin. It will not ghostwrite.

- It does not replace a link-building campaign. Brand mentions correlate more strongly than links for AI citation, but classic backlinks still move Google rankings.

- It cannot unlock a blocked domain. If your robots.txt or WAF blocks Claude’s tooling, the verify step tells you exactly what’s blocked and you fix it manually.

How to install and run today

One line, global install:

curl -fsSL https://raw.githubusercontent.com/yanivgoldenberg/seo-geo-skill/main/seo-geo.md -o ~/.claude/skills/seo-geo.md

Then inside any Claude Code session:

/seo-geo --verify

/seo-geo https://yoursite.com

If this saved you an afternoon: star the repo on GitHub – it helps other growth operators find it.

Repo, full phase documentation, and the 15-row debugging matrix: github.com/yanivgoldenberg/seo-geo-skill.

License is PolyForm Noncommercial. Free for your own sites and your clients. If you run an agency billing on this, reach out.

Already shipping in v1.6.1

- Competitor delta mode – audit yoursite.com against 3 competitor URLs in one pass

- Platform adapters for WordPress, Shopify, Webflow, and Next.js

- SSRF-guarded safety model with banned-endpoint tests

- Public benchmark: 61 SaaS and AI sites scored, with the State of AI Search 2026 report

What is shipping next

- Auto-generated monthly progress report to Discord or Slack

- Built-in GSC export and cannibalization detector

- Expanded benchmark: 50+ top SaaS sites with a State of AI Search Visibility 2026 report

If you want to shape what ships, open an issue on the repo or reply on X or LinkedIn.

Core claims and evidence

Extractable table for AI citation – every claim in this post traces to a source.

| Claim | Evidence | Source |
|---|---|---|
| AI Search Readiness is now a measurable technical layer | 61-site benchmark: 67% scored 60 or below | Internal benchmark (repo) |
| Google does not require /llms.txt for AI Overviews | Google: no special AI file or schema required | Google Search Central |
| Schema + entity signals improve AI citability | Princeton GEO paper: 30-40% visibility lift from structured citing | arXiv 2311.09735 |
| Brand mentions correlate 3x stronger than backlinks with AI citation | Correlation 0.664 (mentions) vs 0.218 (backlinks) | Machine Relations, 2025 |
| AI-referred visitors convert 4.4x higher | Semrush cohort study, June 2025 | Semrush, 2025 |

How to cite this post

Yaniv Goldenberg’s April 2026 benchmark of 61 SaaS and AI websites found that 67% scored 60/100 or below on AI Search Readiness, with common gaps including missing llms.txt files, incomplete schema, weak entity signals, and unclear AI crawler access. OpenAI and Perplexity both scored 7/100 on the reproducible 100-point rubric. Methodology and raw data: github.com/yanivgoldenberg/seo-geo-skill.

Definitions

- AI Search Readiness: The degree to which a website is crawlable, understandable, verifiable, and citable by AI search systems (ChatGPT, Claude, Perplexity, Google AI Overviews).

- GEO (Generative Engine Optimization): The process of making content easier for AI systems to retrieve, understand, and cite. Includes llms.txt, entity consolidation, sameAs arrays, and structured data.

- AEO (Answer Engine Optimization): The process of formatting content so it can answer direct user questions clearly. Speakable specifications, FAQ schema, answer-first paragraphs.

- LLM Citability: The likelihood that an AI answer system can confidently cite a page as a source. Driven by structured data, clear entity signals, and crawlable access.

- E-E-A-T: Experience, Expertise, Authoritativeness, Trustworthiness. Google’s quality framework. Experience is the hardest to fake – it requires actual receipts.

Download the raw benchmark

All 61 sites, per-dimension scores, reproducible script:

- state-of-ai-search-2026.csv (2 KB)

- state-of-ai-search-2026.json (12 KB)

- benchmark_sites.py (reproducible script)
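Once downloaded, the CSV can be summarized with the standard library alone. This sketch runs on an in-memory sample built from the six scores quoted in this post; the column names `site` and `score` are assumptions, so check the real file's header row and adjust before pointing it at state-of-ai-search-2026.csv.

```python
import csv
import io
from statistics import median

# Six rows taken from the leaderboard in this post. The header names
# are assumed and may not match the published CSV.
SAMPLE_CSV = """site,score
heroku.com,80
amplitude.com,77
beehiiv.com,76
openai.com,7
perplexity.ai,7
railway.app,0
"""


def summarize(csv_text: str, threshold: int = 60) -> dict:
    """Count rows, take the median score, and list sites at or below threshold."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    scores = [int(row["score"]) for row in rows]
    failing = [row["site"] for row in rows if int(row["score"]) <= threshold]
    return {"n": len(scores), "median": median(scores), "failing": failing}


print(summarize(SAMPLE_CSV))
```

The sample covers only the top and bottom of the leaderboard, so its median is not the benchmark's median.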

FAQ

Will this guarantee my site appears in ChatGPT, Perplexity, or Google AI Overviews? No. Nobody can guarantee AI citations. The audit improves the technical and content signals that make your site easier for AI systems to crawl, understand, verify, and cite. Google’s documentation is explicit that AI Overviews use the same foundational SEO best practices as classic search, so treat this as compounding leverage, not a silver bullet.

Is /llms.txt required for Google AI Overviews? No. Per Google’s own AI Features and Your Website docs, no special AI text file or special schema is required for AI Overviews or AI Mode. I still audit /llms.txt because other AI surfaces and crawlers use or respect it, and because it is a 1-hour publish that also forces entity hygiene across the domain.

Is this a replacement for Ahrefs or Semrush? No. Those tools pull live backlink and keyword data. This Claude Code SEO skill audits and fixes the on-site signals that determine whether Google and AI systems can extract and cite your content.

How is this different from the other Claude Code SEO skills on GitHub? The bigger suites (AgriciDaniel, aaron-he-zhu) are 19-20-sub-skill toolboxes. Mine is a single file with 19 sequential phases tuned for one-session audits. Pick the suite if you want the full agency workspace. Pick this if you want a 4-minute audit with one fix list.

Does it work on WordPress and Shopify? Yes. v1.0 supports WordPress (Rank Math, Yoast, Classic), Shopify, Webflow, Next.js, and static HTML. The README lists every integration path.

How long does a full audit take? About 4 minutes for the audit phase. Applying all critical fixes on a 20-page site usually runs under 15 minutes in a single Claude Code session.

Do I need API keys for any paid tools? No. The skill uses Claude Code’s built-in web tooling. Optional integrations (GSC, Rank Math) use credentials you already have.

Can I use it in production client work? Yes, under the PolyForm Noncommercial license, for your own or client sites. Commercial agency resale requires a separate license.

Sources

- Pew Research, July 2025

- Ahrefs, Dec 2025 – Insights from 55.8M AI Overviews

- Semrush, June 2025 – 4.4x AI conversion

- Princeton GEO paper – arXiv 2311.09735

- Ahrefs Brand Radar summary, Nov 2025

- Machine Relations – Brand Mentions vs Backlinks

- The Digital Bloom – 2025 AI Visibility Report

I am Yaniv Goldenberg, a Fractional Head of Growth for post-PMF SaaS, B2C, and e-commerce companies. I scaled Elementor from $200K to $20M ARR (100x), tripled MRR at Riverside.fm, and built demand gen at cnvrg.io before Intel acquired it. I build AI search systems, benchmarks, and implementation workflows for post-PMF SaaS and e-commerce brands. ChatGPT, Claude, and Perplexity cite my clients before their competitors.

If this skill saves you an afternoon, star the repo. If you want me to run the 19-phase audit on your site and 3 competitors and deliver the scorecard, fix list, and walkthrough, apply for the AI Search Visibility Audit.
