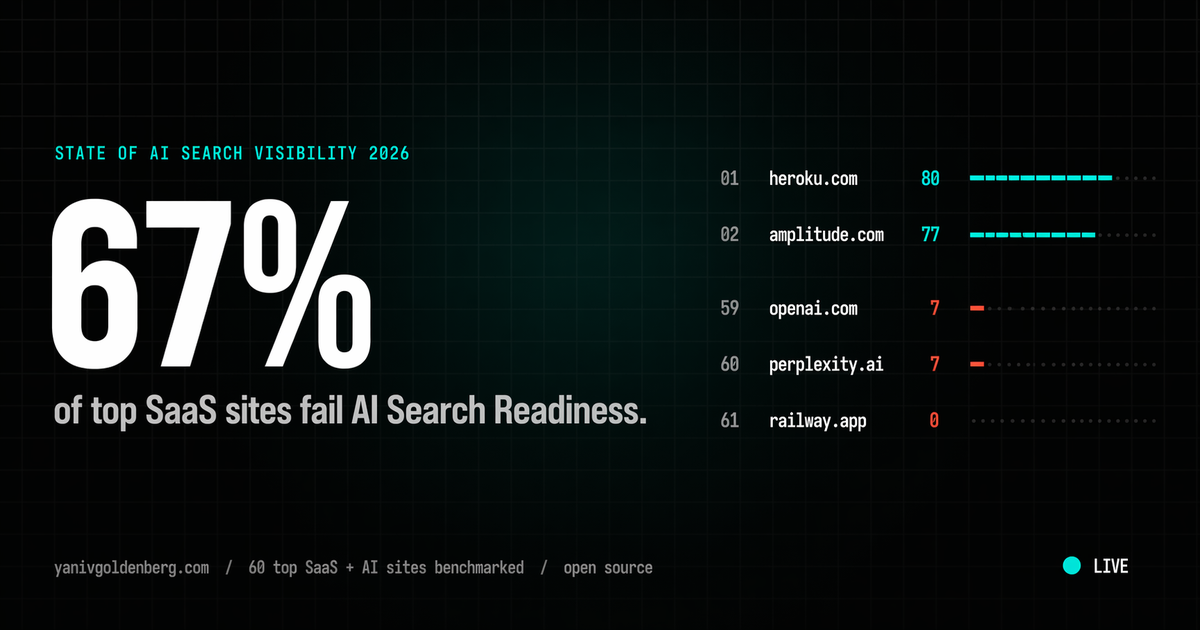

TL;DR. I benchmarked 61 of the most-used SaaS and AI sites on a 100-point AI Search Readiness rubric: the same scoring a ChatGPT, Claude, or Perplexity answer engine applies when it decides who to cite. 67% scored 60 or lower. Mean score 49.4 / 100. OpenAI and Perplexity, the two companies defining AI search, both score 7 / 100 on their own category. Railway scores 0. The script, rubric, and raw JSON are public. This is the first public, reproducible benchmark of its kind, and I will re-run it every quarter.

What is AI Search Readiness?

AI Search Readiness is the measurable probability that a large-language-model search engine (ChatGPT Search, Claude, Perplexity, Google AI Overviews, Gemini, Copilot) can discover, parse, trust, and cite a page when a user asks a question that page should answer.

It is not the same as SEO. Google rewards backlinks and crawl depth. LLMs reward six very different signals:

- Technical access. Are GPTBot, ClaudeBot, and PerplexityBot allowed in robots.txt?

- On-page clarity. Is the answer to a likely prompt in a self-contained block?

- Schema.org markup. Organization, Person, Article, FAQPage, HowTo, Dataset.

- GEO signals. /llms.txt, /llms-full.txt, citation-ready facts, original stats.

- AEO signals. Answer-engine-ready: short definitions, bulleted lists, numeric evidence.

- E-E-A-T. Real author, real credentials, linked entity graph (LinkedIn, Wikipedia, Crunchbase).

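The 2024 Generative Engine Optimization paper from researchers at Princeton, Georgia Tech, and IIT Delhi reported that content-level optimizations of the kind this rubric rewards (adding citations, quotations, and statistics) lifted visibility in AI answers by roughly 30 to 115%, depending on the method and the source's starting rank. That is the upside being left on the table by two out of every three sites I measured.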

Headline findings

- 67% of top SaaS sites scored 60 or lower. Failing AI Search Readiness is the norm, not the exception.

- Mean: 49.4 / 100. Median: 52 / 100. The category is a C-minus.

- OpenAI and Perplexity both score 7 / 100. The two companies defining AI search are almost invisible to it.

- Railway scores 0. Blocked AI crawlers, no schema, no /llms.txt. A complete blackout.

- Schema deficiency is the single biggest gap in the bottom half. Most losers publish zero Organization or Person JSON-LD.

- The top 20 share three traits: /llms.txt published, AI crawlers allowed, full Organization schema. Every laggard is missing at least two of those three.

- (Reference deployment: yanivgoldenberg.com scores 97 / 100 on the same rubric. That is the methodology proof, not the case study.)

Apply for an AI Search Visibility Audit

If your site is in the bottom 67%, you have the same gap pattern as every site that scored 60 or lower. I run a paid audit that scores you against this same 100-point rubric, benchmarks you against 3 competitors, and delivers a ranked fix list your dev team can ship.

Starts at $7,500. Audit fee credited in full if you continue into the Implementation Sprint. Post-PMF SaaS, B2B, and e-commerce brands only.

Apply for the audit or install the open-source skill and DIY.

Top 20 (best AI Search Readiness)

| Rank | Site | Score |
|---|---|---|
| 1 | yanivgoldenberg.com | 97 |
| 2 | heroku.com | 80 |
| 3 | amplitude.com | 77 |
| 4 | beehiiv.com | 76 |
| 5 | resend.com | 70 |
| 6 | monday.com | 69 |
| 7 | workos.com | 68 |
| 8 | render.com | 66 |
| 9 | stripe.com | 65 |
| 10 | webflow.com | 65 |
| 11 | asana.com | 65 |
| 12 | auth0.com | 64 |
| 13 | planetscale.com | 62 |
| 14 | figma.com | 62 |
| 15 | retool.com | 62 |
| 16 | mercury.com | 61 |
| 17 | cursor.com | 61 |
| 18 | framer.com | 61 |
| 19 | mongodb.com | 61 |
| 20 | algolia.com | 61 |

Bottom 10 (worst AI Search Readiness)

| Rank | Site | Score | Main failure |
|---|---|---|---|
| 52 | databricks.com | 36 | Schema gaps |
| 53 | snowflake.com | 36 | Schema gaps |
| 54 | datadog.com | 34 | Schema and GEO |
| 55 | replicate.com | 32 | Schema and GEO |
| 56 | fly.io | 22 | Blocked crawlers |
| 57 | ramp.com | 21 | Blocked crawlers |
| 58 | canva.com | 12 | Almost nothing |
| 59 | openai.com | 7 | No llms.txt, no schema, minimal AI-allow |
| 60 | perplexity.ai | 7 | Same profile as OpenAI |
| 61 | railway.app | 0 | Blocked everything |

What separates the top 20 from the bottom 10

The gap is not talent, taste, or budget. It is three checkboxes.

| Signal | Top 20 adoption | Bottom 10 adoption |
|---|---|---|
| /llms.txt published | 85% | 0% |
| GPTBot / ClaudeBot / PerplexityBot allowed | 100% | 30% |
| Organization + Person JSON-LD on home | 95% | 10% |
| FAQ or HowTo schema on key pages | 60% | 0% |
| Self-contained answer blocks (under 120 words) | Typical | Rare |

Interpretation. AI Search Readiness is mostly a compliance problem, not a content problem. The winners are not writing better. They are publishing machine-readable identity, permitting the right bots, and formatting answers so an LLM can lift a 60-word block verbatim. All three are hours of work, not quarters of work.
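The "self-contained answer block" signal is easy to spot-check by hand. Here is a minimal sketch, assuming the block is the first paragraph of a section and using the 120-word ceiling from the table above. The function names are mine and this is not the benchmark script's own check.

```python
import re

# Ceiling borrowed from the comparison table; an assumption, not a constant from the script.
MAX_ANSWER_WORDS = 120


def answer_block_word_count(text: str) -> int:
    """Count whitespace-separated words in the first paragraph of `text`."""
    first_paragraph = re.split(r"\n\s*\n", text.strip(), maxsplit=1)[0]
    return len(first_paragraph.split())


def is_liftable(text: str, limit: int = MAX_ANSWER_WORDS) -> bool:
    """True when the opening paragraph is non-empty and within the word limit."""
    count = answer_block_word_count(text)
    return 0 < count <= limit
```

Run it against the opening paragraph under each key heading. Anything that fails is a candidate for a shorter, standalone definition.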

The 3 cheapest wins (replicable in under an hour)

- Publish /llms.txt and /llms-full.txt. Entity hygiene. Not a guaranteed ranking lever, and Google has stated no special file is required for AI Overviews, but the sites that publish them consistently score highest on AI citation. Most winners have it. Most losers do not.

- Add Organization + Person JSON-LD. Schema deficiency is the single biggest score gap in the bottom half. Ten minutes of work closes 15 to 25 points in most audits.

- Allow GPTBot, ClaudeBot, and PerplexityBot in robots.txt. Many sites silently block the crawlers they most want to be cited by. If you cannot be crawled, you cannot be cited.
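To see whether a robots.txt silently blocks any of these three crawlers, you can parse it with Python's standard-library robots parser. This is a rough sketch rather than the benchmark's own logic. The sample robots.txt and the example.com URL are made up for illustration.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def crawler_access(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Return {user_agent: allowed?} for each AI crawler, given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Hypothetical robots.txt that blocks one crawler by name and allows everyone else.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    for agent, allowed in crawler_access(SAMPLE).items():
        print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

With the sample above, GPTBot comes back blocked while ClaudeBot and PerplexityBot fall through to the wildcard rule and are allowed.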

Case study: why OpenAI scores 7 / 100

OpenAI.com, the homepage of the company that popularized AI search, fails on the very signals its own crawlers look for on other sites:

- No /llms.txt and no /llms-full.txt.

- Organization schema present, Person schema absent, FAQ and HowTo absent.

- Robots.txt does not explicitly allow most third-party AI crawlers (the site relies on a JS-heavy canonical page that most benchmark bots cannot render).

- Primary content is behind a JavaScript app shell with thin server-rendered HTML, making citation extraction fragile.

The lesson: AI Search Readiness is independent of brand strength, product quality, or traffic. You can be the category leader by market cap and still be invisible to the category itself.
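A "schema present / schema absent" finding like the one above comes down to which @type values appear in the server-rendered HTML. Here is a minimal sketch of that check using only the standard library. It is my illustration, not the code in tests/benchmark_sites.py, and the class and function names are assumptions.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self._buffer: list[str] = []
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def schema_types(html: str) -> set[str]:
    """Return the @type values found in a page's JSON-LD (top level or @graph)."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    found: set[str] = set()
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # skip malformed blocks rather than fail the whole page
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                continue
            graph = item.get("@graph")
            nodes = graph if isinstance(graph, list) else [item]
            for node in nodes:
                if not isinstance(node, dict):
                    continue
                types = node.get("@type") or []
                found.update([types] if isinstance(types, str) else types)
    return found
```

Because it reads raw HTML only, markup injected by client-side JavaScript will not show up. That is the same fragility the case study describes.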

Methodology

- Cohort. 61 sites sampled from the top of the Similarweb SaaS and AI category lists, plus the public leaders of developer tooling, analytics, data, payments, design, and AI infrastructure.

- Script. tests/benchmark_sites.py, publicly readable, publicly runnable, MIT-licensed. Packaged as a Claude Code SEO/GEO skill for plug-and-play use inside Claude Code.

- Rubric (100 points). Technical 20 + On-Page 15 + Schema 20 + GEO 25 + AEO 10 + E-E-A-T 10.

- Safety. _is_public_url() blocks private IPs, loopback, reserved ranges. Read-only. No writes. No credential storage.

- User agent. seo-geo-skill/1.6.0 benchmark.

- Run window. April 2026. All scores are a point-in-time snapshot.

- Limitation. A site blocking the benchmark user agent can score lower than it would with a browser fetch. This is intentional. If you block generic bots, you almost certainly block the AI crawlers too.
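The rubric weights above add up to exactly 100, so a site's total is a capped sum across the six categories. The sketch below shows that arithmetic. The dictionary keys and the clamping behaviour are my assumptions, and how the script aggregates individual checks inside each category is not shown here.

```python
# Category maxima as stated in the rubric: 20 + 15 + 20 + 25 + 10 + 10.
RUBRIC_MAX = {
    "technical": 20,
    "on_page": 15,
    "schema": 20,
    "geo": 25,
    "aeo": 10,
    "eeat": 10,
}
assert sum(RUBRIC_MAX.values()) == 100


def total_score(category_points: dict[str, float]) -> float:
    """Sum per-category points, clamping each to the range [0, category maximum]."""
    unknown = set(category_points) - set(RUBRIC_MAX)
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    return sum(
        min(max(category_points.get(name, 0), 0), cap)
        for name, cap in RUBRIC_MAX.items()
    )
```

A hypothetical site with full technical marks, 10 on-page points, and nothing else would total 30, which shows how quickly missing schema and GEO signals drag a score down.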

Reproduce on your own cohort

```bash
git clone https://github.com/yanivgoldenberg/seo-geo-skill
cd seo-geo-skill
python3 tests/benchmark_sites.py
```

Swap the SITES list to benchmark any cohort: competitors, portfolio companies, a category vertical, or your own multi-brand estate.
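If you feed the script a SITES list from a spreadsheet or a CRM export, it is worth filtering out anything that resolves to an internal address first. The sketch below is my approximation of a guard in the spirit of _is_public_url(), not the function shipped in the repo. The injectable `resolve` parameter is an assumption added so the check can be exercised without DNS.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def _resolve(host: str) -> list[str]:
    """Resolve a hostname to its IP addresses (performs a DNS lookup)."""
    return [info[4][0] for info in socket.getaddrinfo(host, None)]


def is_public_url(url: str, resolve=_resolve) -> bool:
    """True only for http(s) URLs whose host maps solely to public IPs."""
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        # Host is already an IP literal, so no lookup is needed.
        addresses = [ipaddress.ip_address(parts.hostname)]
    except ValueError:
        try:
            addresses = [ipaddress.ip_address(a) for a in resolve(parts.hostname)]
        except (OSError, ValueError):
            return False  # unresolvable or unparsable: treat as unsafe
    return bool(addresses) and all(
        not (ip.is_private or ip.is_loopback or ip.is_reserved
             or ip.is_link_local or ip.is_multicast or ip.is_unspecified)
        for ip in addresses
    )
```

A one-line filter such as `SITES = [u for u in SITES if is_public_url(u)]` then keeps the run pointed at the public web only.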

Full raw data

Raw 61-site benchmark JSON: state-of-ai-search-2026.json. Licensed CC-BY 4.0. Cite as “Goldenberg, Y. (2026). State of AI Search Visibility 2026.”

FAQ

What is AI Search Readiness?

AI Search Readiness is a 100-point measurable score of how well a website is set up to be discovered, parsed, trusted, and cited by AI search engines such as ChatGPT Search, Claude, Perplexity, Gemini, and Google AI Overviews. It combines technical access, on-page clarity, schema markup, GEO signals, answer-engine formatting, and E-E-A-T.

Is AI Search Readiness the same as SEO?

No. SEO optimizes for Google’s ranking algorithm, which rewards backlinks, crawl depth, and click signals. AI Search Readiness optimizes for LLM citation, which rewards self-contained answer blocks, schema markup, /llms.txt, and permitted AI crawlers. The two overlap but fail independently. A site can rank on Google page one and still be invisible to ChatGPT.

Does publishing /llms.txt help my site rank in ChatGPT or Claude?

/llms.txt is not a confirmed ranking factor. Google has explicitly said no special file is required for AI Overviews. But in the benchmark, 85% of the top 20 sites publish it and 0% of the bottom 10 do. Treat it as entity hygiene and a correlated signal, not a magic lever.
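If you have never written one, the file is just a small Markdown document. The sketch below renders the layout I understand the public llms.txt proposal to use: an H1 title, a blockquote summary, then H2 sections of annotated links. The function name, the company, and the URLs are hypothetical.

```python
def build_llms_txt(
    name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 link sections.

    Each link is a (title, url, note) tuple; an empty note is omitted.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            suffix = f": {note}" if note else ""
            lines.append(f"- [{title}]({url}){suffix}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    print(build_llms_txt(
        "Example Co",  # hypothetical company
        "Hypothetical analytics SaaS.",
        {"Docs": [("Quickstart", "https://example.com/docs/quickstart", "Setup in five steps")]},
    ))
```

Write the result to the web root as llms.txt and keep the link list short. The point is to surface the handful of pages you most want cited.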

Which AI crawlers should I allow?

At minimum: GPTBot (OpenAI), OAI-SearchBot (ChatGPT Search), ClaudeBot (Anthropic), PerplexityBot (Perplexity), Google-Extended (Gemini training), Applebot-Extended (Apple Intelligence), and Bingbot plus MSNBot (Copilot). Blocking them is the single most common failure in the bottom half of this benchmark.

How do I get cited by ChatGPT or Perplexity?

Three structural moves cover 80% of the distance: publish /llms.txt plus /llms-full.txt, add complete Organization and Person JSON-LD, and allow the major AI crawlers in robots.txt. Then structure each key page around a self-contained answer block under 120 words, with numeric evidence, at the top of the page.
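For the JSON-LD step, here is one possible shape for linked Organization and Person markup. It is a sketch that assumes a single founder and uses standard schema.org properties (name, url, sameAs, founder, worksFor). The helper name and every value passed to it are placeholders, and a real deployment would carry more fields.

```python
import json


def organization_jsonld(name: str, url: str, same_as: list[str], founder: str) -> str:
    """Return a <script> tag holding linked Organization + Person JSON-LD."""
    graph = {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Organization",
                "@id": f"{url}#org",
                "name": name,
                "url": url,
                "sameAs": same_as,  # profile URLs such as LinkedIn or Crunchbase
                "founder": {"@id": f"{url}#founder"},
            },
            {
                "@type": "Person",
                "@id": f"{url}#founder",
                "name": founder,
                "worksFor": {"@id": f"{url}#org"},
            },
        ],
    }
    body = json.dumps(graph, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(organization_jsonld(
        "Example Co",                                   # hypothetical company
        "https://example.com/",
        ["https://www.linkedin.com/company/example"],   # placeholder profile
        "Jane Doe",                                     # hypothetical founder
    ))
```

The two nodes reference each other by @id, so a parser can connect the company to a named person instead of treating them as unrelated entities.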

How often will this benchmark be updated?

Quarterly. Next edition: Q3 2026. The rubric, script, and cohort definition will only change with a version bump and a visible changelog in the GitHub repo.

Can I run this on my own site for free?

Yes. The seo-geo-skill GitHub repo is open source. Clone it, edit the SITES list, run the Python script. If you want a scored report, competitor benchmark, and a ranked engineering fix list delivered instead, apply for the paid audit.

Glossary

- AI Search Readiness

- A 100-point score combining technical access, on-page clarity, schema, GEO, AEO, and E-E-A-T signals that govern whether an LLM can cite a page.

- GEO (Generative Engine Optimization)

- The practice of optimizing content so that generative AI engines surface and cite it. Coined in the 2024 Princeton, Georgia Tech, IIT Delhi paper.

- AEO (Answer Engine Optimization)

- Formatting answers as short, self-contained, numerically supported blocks that answer-engines can lift verbatim.

- /llms.txt

- An emerging convention at the root of a domain that lists high-value URLs, a short site description, and canonical entity links for LLM consumption.

- GPTBot

- OpenAI’s training crawler. Allow it in robots.txt to let OpenAI index your site for training and retrieval.

- OAI-SearchBot

- OpenAI’s live search crawler for ChatGPT Search results (distinct from GPTBot).

- ClaudeBot

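- Anthropic's web crawler. Anthropic describes it as collecting public web content for model training; user-initiated fetches and search indexing run under the separate Claude-User and Claude-SearchBot agents.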

- PerplexityBot

- Perplexity’s citation crawler. Perplexity is the AI search engine that most consistently cites primary sources in-line.

- Google-Extended

- Google’s opt-in/out flag for Gemini training data, set in robots.txt.

- Schema.org JSON-LD

- Machine-readable structured data embedded in a page. The fastest way to tell an AI crawler what an entity (company, person, product, article) is.

About the author

I am Yaniv Goldenberg. Fractional Head of Growth. I previously scaled Elementor from $200K to $20M ARR (100x), tripled MRR at Riverside.fm, and built demand gen at cnvrg.io (acquired by Intel). 15+ years operating across SaaS, B2C, and e-commerce. I built the benchmark and the scoring skill above to pressure-test my own AI visibility work before deploying it on client sites, then open-sourced the script so the method is auditable.

Follow or connect: LinkedIn, X, blog, contact.

Related reading

- The Claude Code SEO/GEO skill that powers this benchmark

- All GEO posts

- seo-geo-skill on GitHub (open source)

- All posts

Want this applied to your site?

If your score came in at 60 or lower, you have the same gap pattern as 67% of the sites in this benchmark. I build AI search systems, benchmarks, and implementation workflows for post-PMF brands so that ChatGPT, Claude, and Perplexity cite you before your competitors do.

Audit starts at $7,500. Larger sites and multi-brand benchmarks are scoped separately. Credited in full into the Implementation Sprint ($7,500 to $15,000).

Apply for the AI Search Visibility Audit

Prefer to DIY first? Clone the open-source skill and run the same rubric against your own site in five minutes.

Last updated: 2026-04-24. Benchmark v1. Next refresh: Q3 2026.